Google parent Alphabet is riding a wave of investor confidence as its stock hits record highs, fueled by Wall Street's growing enthusiasm for the company's custom AI chip strategy. The momentum comes as Google's Tensor Processing Units (TPUs) gain traction beyond the company's own walls, with reports suggesting Meta is considering a major shift to Google's AI hardware.

This isn't just another tech stock rally - it's a fundamental reassessment of Google's position in the AI infrastructure arms race. Alphabet shares have doubled in seven months, lifting the company's market value to $3.8 trillion, as markets have grown more confident in the search giant's ability to fend off the threat from ChatGPT owner OpenAI.

The catalyst for this latest surge appears to be Google's aggressive push to commercialize its TPU technology. According to The Information, Meta is considering using Google's TPUs in its data centers in 2027 and may also rent TPUs from Google's cloud unit next year. This would mark a significant departure from Meta's current reliance on Nvidia hardware and represent a major validation of Google's chip strategy.

Google's latest TPU iteration, Ironwood, is the company's most powerful and energy-efficient custom silicon to date, offering more than 4X better performance per chip for both training and inference workloads compared to Google's previous generation, according to Google Cloud. That makes it particularly well-suited for the current industry shift from training frontier models to powering high-volume, low-latency AI inference.

What's particularly interesting about this development is how it's playing out in the broader market. When reports of Meta's potential TPU adoption surfaced, Nvidia shares fell as much as 7% before recovering to trade down 4%, while Alphabet shares climbed for a third consecutive day. The market reaction suggests investors see Google's TPU strategy as a credible alternative to Nvidia's dominance in AI hardware.

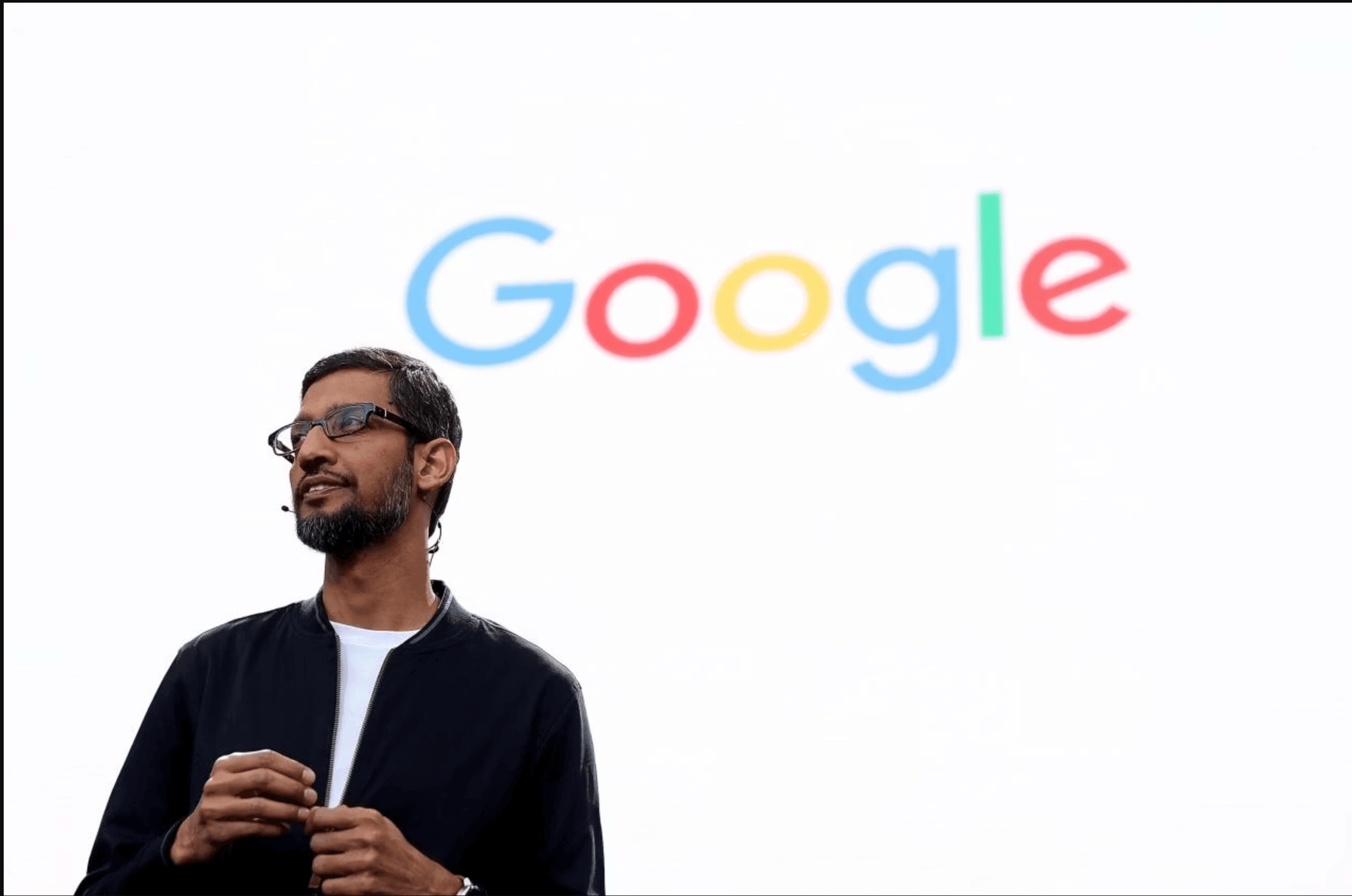

Google CEO Sundar Pichai has acknowledged the "elements of irrationality" in the current AI investment boom while maintaining that the underlying technology transformation is profound.

"We can look back at the internet right now. There was clearly a lot of excess investment, but none of us would question whether the internet was profound," Pichai told the BBC. "I expect AI to be the same. So I think it's both rational and there are elements of irrationality through a moment like this."

The TPU story represents Google's long-term bet on domain-specific architecture. Unlike Nvidia's GPUs, which were originally designed for graphics processing and later adapted for AI workloads, TPUs are application-specific integrated circuits (ASICs) designed from the ground up for neural networks. This specialization lets them excel at large-scale calculations while minimizing the time data spends moving across the chip.

Google's approach to TPU commercialization appears to be gaining momentum beyond just Meta. Anthropic plans to expand its use of Google Cloud technologies, including access to up to one million TPUs, in a deal worth tens of billions of dollars. And according to some Google Cloud executives, the Meta deal could generate revenue equal to as much as 10% of Nvidia's current annual data center business.

This isn't Google's first attempt to challenge Nvidia's dominance, but it might be the most credible. The company began using its first-generation TPU internally in 2015 and announced it publicly in 2016, then opened TPUs to cloud customers in 2018. Since then, Google has launched more advanced versions specifically engineered to handle artificial intelligence workloads, with each generation showing significant performance improvements.

The timing of this TPU push aligns with broader industry trends. As AI models become more sophisticated and inference workloads grow exponentially, companies are looking for more efficient alternatives to traditional GPU architectures. Google's TPUs, with their specialized features like the matrix multiply unit (MXU) and proprietary interconnect topology, offer potential advantages for specific AI frameworks and high-throughput matrix operations central to large language models.

Nvidia hasn't taken this challenge lightly. The company took the unusual step of posting on X to defend its position, stating: "We're delighted by Google's success - they've made great advances in AI, and we continue to supply to Google. NVIDIA is a generation ahead of the industry - it's the only platform that runs every AI model and does it everywhere computing is done."

For Google, the TPU strategy represents more than just a hardware play. It's about building a complete AI ecosystem. The company's full-stack approach includes not just the chips themselves but also the software infrastructure, with tools like the XLA compiler and JAX framework designed to work seamlessly with TPU architecture.
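
For readers curious what that software stack looks like in practice, here is a minimal sketch of a JAX function compiled through XLA. The function name `dense_layer`, the array shapes, and the random inputs are illustrative assumptions, not anything taken from Google's own code. The same script runs on a CPU or GPU if no TPU is attached.

```python
import jax
import jax.numpy as jnp


def dense_layer(x, w, b):
    # One matrix multiply plus a bias and a nonlinearity: the kind of
    # matrix-heavy operation that TPUs are built to accelerate.
    return jax.nn.relu(x @ w + b)


# jax.jit traces the function and hands it to the XLA compiler, which
# generates code for whichever backend is available (CPU, GPU, or TPU).
dense_layer_jit = jax.jit(dense_layer)

# Hypothetical inputs: a batch of 8 vectors and a 512x256 weight matrix.
key = jax.random.PRNGKey(0)
key_x, key_w = jax.random.split(key)
x = jax.random.normal(key_x, (8, 512))
w = jax.random.normal(key_w, (512, 256))
b = jnp.zeros((256,))

y = dense_layer_jit(x, w, b)

print(jax.default_backend())  # name of the backend JAX selected
print(y.shape)
```

The point of the sketch is that the model code itself names no hardware. Targeting a TPU is a matter of which backend JAX finds at runtime, which is part of what makes the chips and the software a single offering.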

As the AI infrastructure battle intensifies, Google's stock performance suggests investors are betting that the company's decade-long investment in custom silicon is finally paying off. Whether TPUs can truly challenge Nvidia's ecosystem dominance remains to be seen, but for now, Wall Street appears convinced that Google has found its footing in the AI hardware race.