Google is readying a model-switching system for Gemini Live that would let users swap between seven different AI models, including a "Thinking" variant with enhanced reasoning. Two of the models are already at the Release Candidate 2 stage ahead of next week's I/O 2026.

Forbes contributor Paul Monckton discovered a hidden model selector in Google App version 17.18.22. The menu, gated behind a server-side flag, reveals seven previously unreported AI model options for Gemini Live conversations, including codenames "Capybara," "Nitrogen," and a specialized personalization variant. The full list, which also includes the existing Default option: Default, A2A_Rev25_RC2, A2A_Rev25_RC2_Thinking, A2A_Rev23_P13n, A2A_Nitrogen_Rev23, A2A_Capybara, A2A_Capybara_Exp, and A2A_Native_Input.

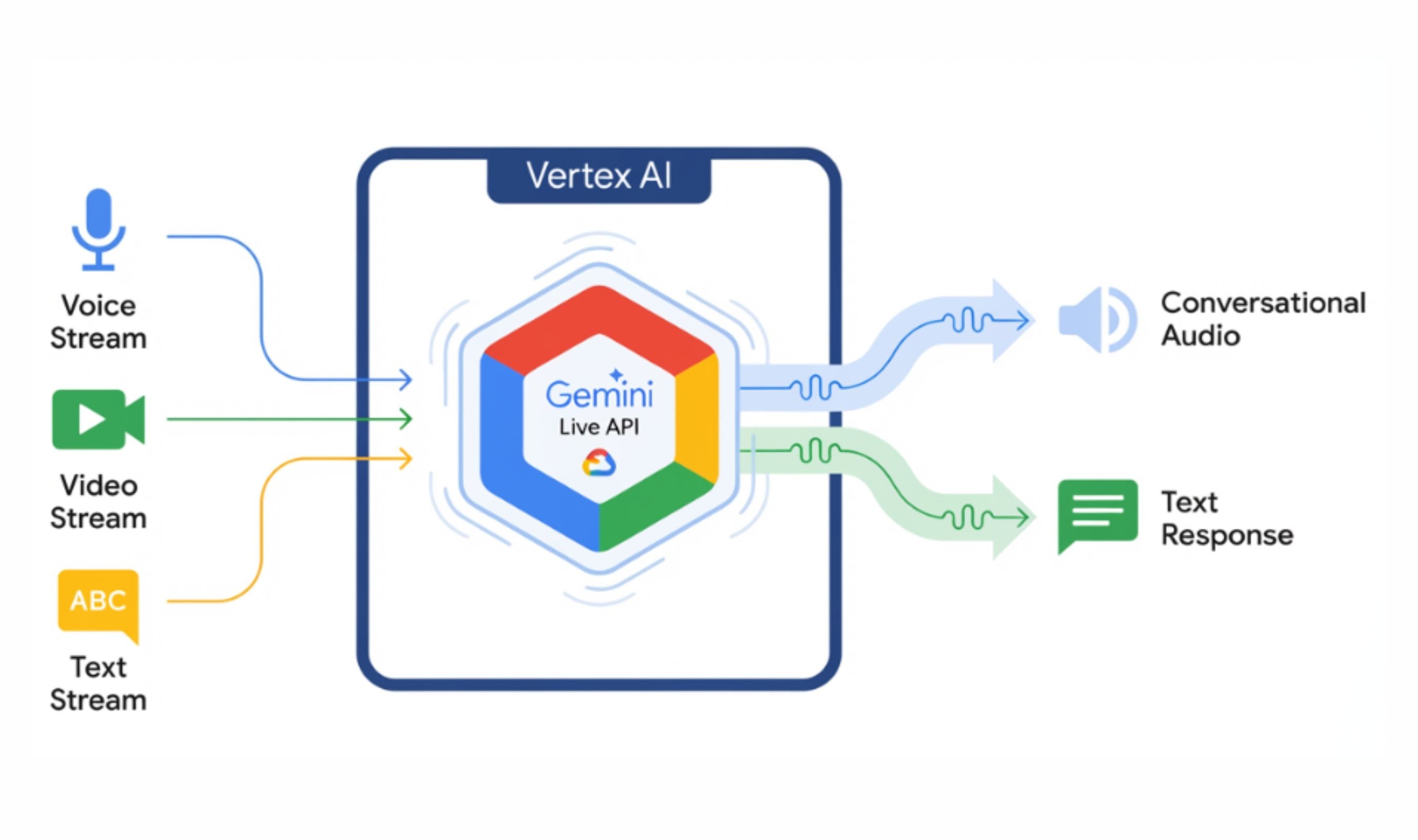

"A2A" most likely stands for Audio-to-Audio, Google's term for models that process speech and audio directly rather than converting them to text first. Two of these, A2A_Rev25_RC2 and A2A_Rev25_RC2_Thinking, appeared overnight on May 8, signaling they're nearing production readiness.

Monckton ran twelve tests on each model and confirmed they produce measurably different responses. Four models accessed the user's location to provide live weather data; three could not. The "Capybara" model identified itself as "Gemini 3.1 Pro" rather than the standard Flash Live model Google typically uses for Gemini Live chats. The P13n (personalization) variant stood out. When asked for the current date and time, it asked which time zone the user was in rather than assuming.

The P13n variant also remembered personal details shared earlier and used them naturally later in the conversation, something the current default Gemini Live model refused to do. The model list is delivered by Google's servers, meaning the company can add or remove models without an app update. That architecture is ideal for a live demo at Google I/O 2026, which begins May 19.
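As a rough illustration of why a server-delivered list needs no app update, here is a minimal sketch of a client that builds its selector purely from a server response. The payload shape, the `model_selector_enabled` flag name, and the `selectable_models` function are all hypothetical; Google's actual wire format is not public.

```python
import json

# Hypothetical server payload: the real format used by the Google App is unknown.
SERVER_RESPONSE = json.dumps({
    "model_selector_enabled": True,  # stands in for the server-side flag
    "models": ["Default", "A2A_Rev25_RC2", "A2A_Rev25_RC2_Thinking"],
})


def selectable_models(raw_response: str) -> list[str]:
    """Return the models the client should offer, based only on server data."""
    config = json.loads(raw_response)
    # With the flag off, the selector stays hidden and only the default is used.
    if not config.get("model_selector_enabled", False):
        return ["Default"]
    # The client hard-codes no model names, so the server can add or
    # remove entries without shipping a new app version.
    return list(config.get("models", ["Default"]))


print(selectable_models(SERVER_RESPONSE))
```

Because the client only renders what it receives, changing the `models` array on the server is enough to change what users see.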

Separately, a Reddit user gained early access to "Gemini Omni," Google's next-generation AI video model. The user received a pop-up prompting them to "create with Gemini Omni," described as Google's "new video model." Metadata suggests Omni is an extension of Google's Veo foundation.

Tests showed Omni handling complex reasoning prompts, such as a professor writing a trigonometric proof on a chalkboard, with lifelike results. A more complex dinner scene revealed common AI glitches, with spaghetti appearing out of thin air on empty plates. The user's attempt to run the infamous "Will Smith eating spaghetti" benchmark was blocked by guardrails. The computing cost is steep: with just two video generation requests on the Google AI Pro plan, the user hit 86% of their daily usage limit.

Google has recently been spotted adding explicit usage limits for Gemini, and the resource-heavy Omni model appears to be the reason.

Both discoveries point to a busy I/O 2026. Google is building the backend for a multi-model Gemini Live experience while simultaneously pushing into AI video generation with Omni. The infrastructure for switchable voice models is already in place, and the RC2 label on two entries suggests they're past the experimental phase.

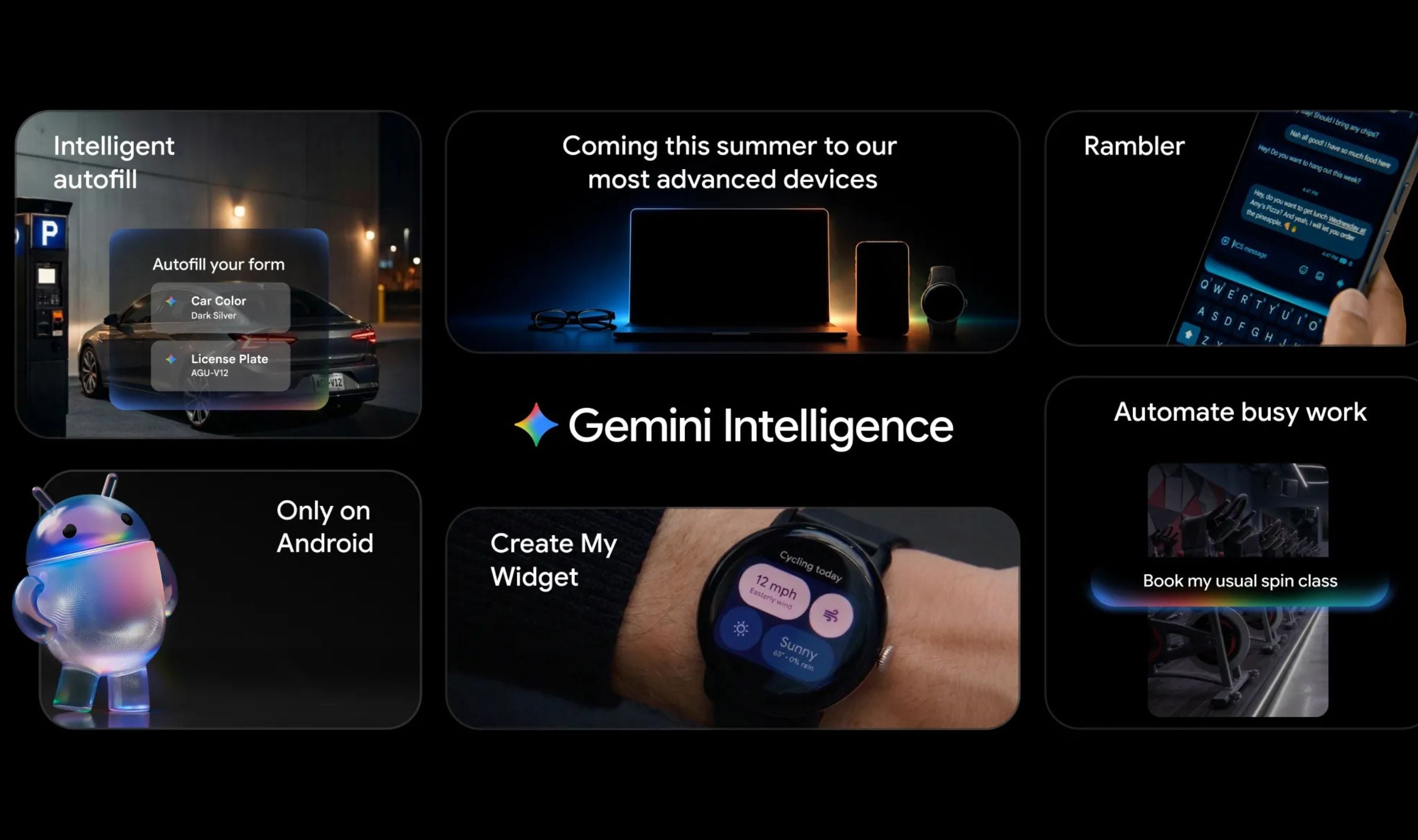

Gemini Intelligence, announced this week, adds another layer. The system will automate tasks across apps and the web, with Chrome auto-browse arriving in June.

Gemini Intelligence begins rolling out to Pixel and select Samsung Galaxy phones this summer.