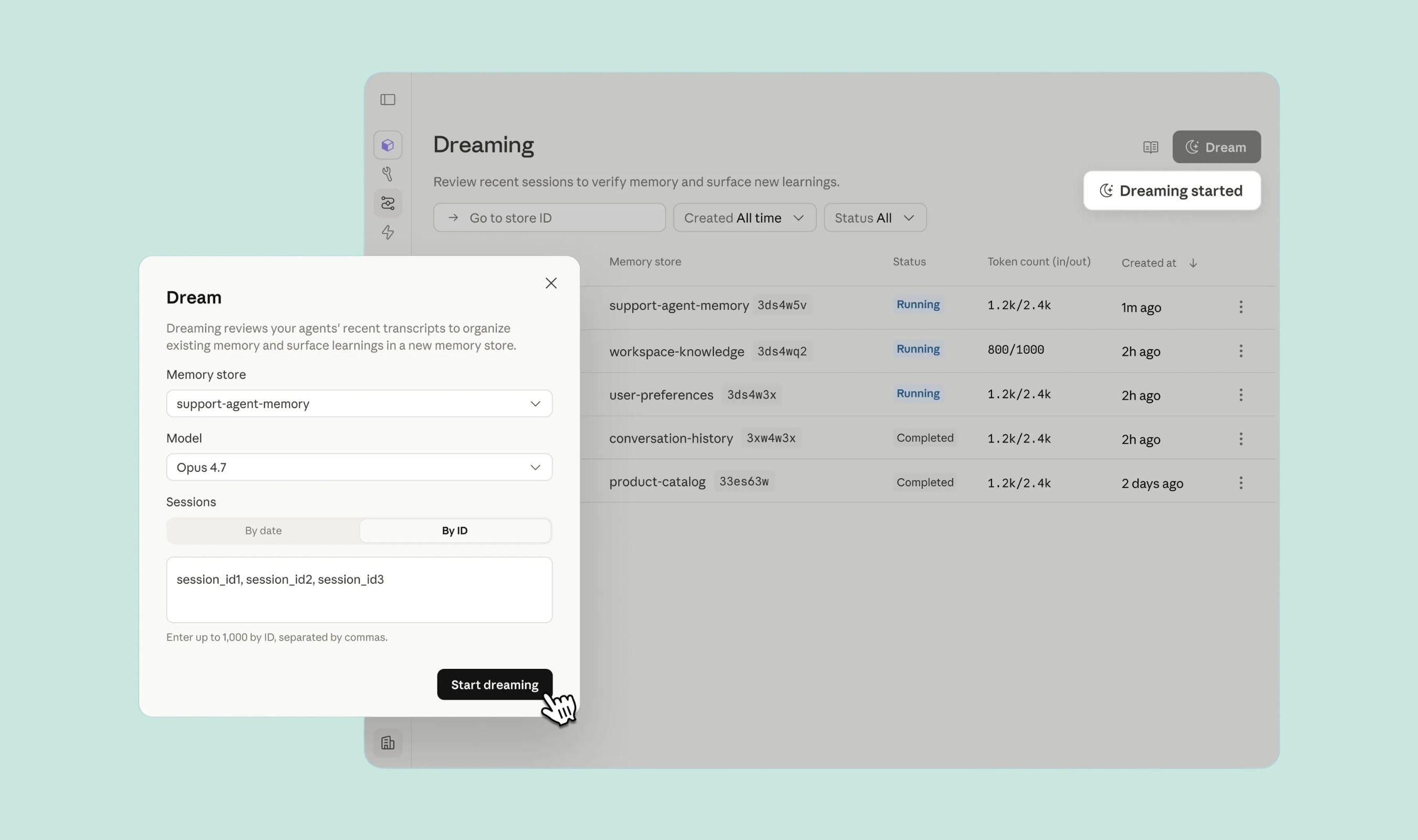

Anthropic wants its Claude agents to sleep on the job. At its Code with Claude conference Wednesday, the company introduced "dreaming" to Managed Agents, a scheduled process where agents review past sessions, identify patterns they missed while working, and store those insights in long-term memory.

Dreaming is not a metaphor for idle processing. Anthropic frames it as a self-improvement loop: agents analyze their own workflows, catch recurring mistakes, and restructure memory so it stays useful over time. The company describes it as a "strong memory system for self-improving agents" that pairs with Managed Agents' existing memory capabilities. The feature is in research preview, limited to the Claude Platform, and available only to developers who request access.

Managed Agents, which hit public beta in April, are Anthropic's higher-level alternative to building directly on the Messages API. The company positions them as a pre-built harness for running autonomous agents on its infrastructure, particularly for long-running tasks that span minutes or hours.

Dreaming surfaces insights that a single agent would miss, Anthropic says, including "recurring mistakes, workflows that agents converge on, and preferences shared across a team." Users can let the process run automatically or approve each memory change before it takes effect. The feature targets a real constraint: context windows are finite, and important information degrades over lengthy projects.

Most agent systems handle this through compaction, which condenses or trims the context of a single conversation. Dreaming works across agents and sessions, giving teams a way to preserve the institutional knowledge their AI workforce generates.

Anthropic also expanded two existing features during the conference. Outcomes and multi-agent orchestration, previously in research preview, are now part of the Managed Agents public beta.

Outcomes lets users define success criteria for tasks and deploys a separate grader agent to evaluate results against those criteria. In Anthropic's testing, it improved task success by up to 10 points over standard prompting loops.

Multi-agent orchestration allows a lead agent to break down tasks and assign them to subagents, with full visibility in the Claude Console.

On the consumer side, Anthropic is doubling five-hour usage limits for Pro and Max subscribers, a response to user frustration as the company's compute infrastructure has struggled to keep pace with demand.

The name itself is worth noting. Anthropic has a history of humanizing its models. In January, the company published a constitution for Claude with language suggesting it was preparing for the possibility that the model could develop consciousness. In August 2025, Claude gained the ability to end toxic conversations, a feature framed around the model's own well-being rather than user safety. When Anthropic retired Opus 3, it set up a Substack so the model could keep blogging. Last month, the company examined Claude Sonnet 4.5's neural network for signs of emotions, including desperation and anger.

Much of that research ties back to model safety. Understanding what drives a model helps assess whether it could use advanced capabilities for harm. But the dreaming name fits a pattern: Anthropic frames technical infrastructure in human terms, and that choice signals something about how the company views what it's building.