Nvidia Unveils Grace Hopper Superchips in Full Production and Introduces DGX GH200 AI Supercomputing Platform at Computex 2023

In a significant announcement made at Computex 2023 in Taipei, Taiwan, Nvidia CEO Jensen Huang revealed that the company's Grace Hopper superchips have entered full production. Furthermore, the Grace platform has already secured six supercomputer wins, indicating its success in the market. These developments serve as a foundation for another major revelation by Huang: the availability of Nvidia's new DGX GH200 AI supercomputing platform, designed to tackle massive generative AI workloads. The DGX GH200 is equipped with 256 Grace Hopper Superchips, delivering a staggering 144TB of shared memory, and has garnered interest from major customers such as Google, Meta, and Microsoft.

In addition to the DGX GH200, Nvidia introduced its new MGX reference architectures aimed at assisting original equipment manufacturers (OEMs) in swiftly constructing AI supercomputers. These reference architectures enable the creation of over 100 systems, facilitating the proliferation of powerful AI computing infrastructure. Moreover, Nvidia unveiled the Spectrum-X Ethernet networking platform, specifically optimized for AI server and supercomputing clusters, further solidifying its commitment to providing comprehensive solutions for AI-driven technologies.

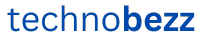

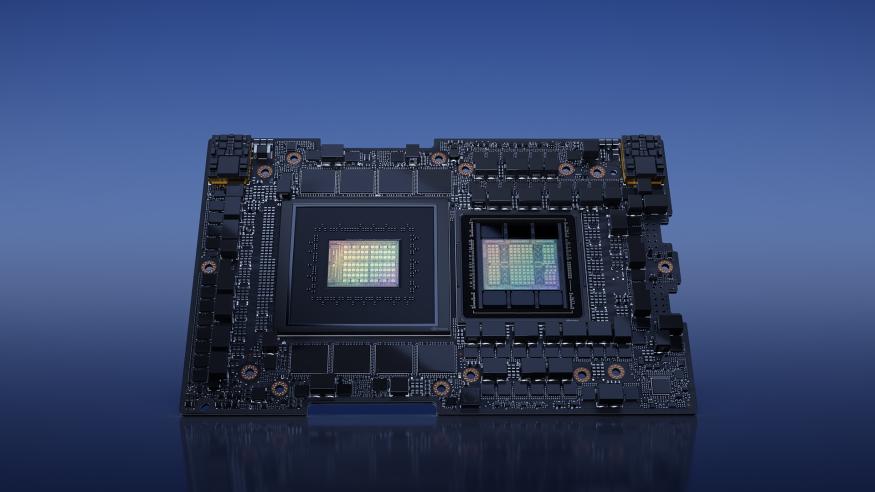

The Grace Hopper Superchips, which have been extensively covered in the past, form the core of Nvidia's latest systems. The Grace chip is Nvidia's proprietary CPU-only Arm processor, while the Grace Hopper Superchip combines a 72-core Grace CPU, a Hopper GPU, 96GB of HBM3, and 512GB of LPDDR5X memory, resulting in a remarkable 200 billion transistors. This amalgamation provides exceptional data bandwidth of up to 1 TB/s between the CPU and GPU, offering a substantial advantage for memory-intensive workloads.

With the Grace Hopper Superchips now in full production, numerous systems are expected to be released by Nvidia's partners, including Asus, Gigabyte, ASRock Rack, and Pegatron. Additionally, Nvidia will introduce its own systems based on these new chips, while simultaneously issuing reference design architectures to original design manufacturers (ODMs) and hyperscale companies.

The DGX GH200 AI supercomputer, part of Nvidia's renowned DGX lineup, has been created to cater to the escalating demands of AI and high-performance computing (HPC) workloads. The existing DGX A100 systems are limited to eight A100 GPUs operating in synchronization. However, to address the growing need for larger and more powerful systems, the DGX GH200 offers unparalleled throughput and scalability for intensive workloads such as generative AI training, large language models, recommender systems, and data analytics.

Specific details regarding the DGX GH200 are yet to be fully disclosed. However, it is known that Nvidia employs a new NVLink Switch System with 36 NVLink switches to interconnect 256 GH200 Grace Hopper chips, resulting in a cohesive unit that performs like a massive GPU. This NVLink Switch System, the third-generation of Nvidia's NVLink Switch silicon, enables the DGX GH200 to surpass previous NVLink-connected DGX configurations, accommodating 256 Grace Hopper CPU+GPUs and 144TB of shared memory. This represents a substantial increase compared to the DGX A100 systems, which offer 320GB of shared memory between eight A100 GPUs. Furthermore, while expanding the DGX A100 system beyond eight GPUs necessitates InfiniBand interconnects and incurs performance penalties, the DGX GH200 leverages Nvidia's NVLink Switch topology, delivering up to 10 times the GPU-to-GPU and 7 times the CPU-to-GPU bandwidth of its predecessor. The new system also provides 5 times the interconnect power efficiency and up to 128 TB/s of bisectional bandwidth.

Boasting 150 miles of optical fiber and weighing 40,000 lbs, the DGX GH200 appears as a single GPU despite comprising 256 Grace Hopper Superchips. Nvidia claims that this configuration enables the DGX GH200 to achieve one exaflop of "AI performance," leveraging 900 GB/s of GPU-to-GPU bandwidth. Notably, the Grace Hopper chip itself supports up to 1 TB/s of throughput when directly connected with the Grace CPU on the same board using the NVLink-C2C chip interconnect.

Nvidia presented projected benchmarks, pitting the DGX GH200 with the NVLink Switch System against a DGX H100 cluster interconnected via InfiniBand. Varying numbers of GPUs were employed in these calculations, ranging from 32 to 256, while ensuring an equal number of GPUs for each system. The results indicated that the enhanced interconnect performance of the DGX GH200 is projected to unlock 2.2 times to 6.3 times more performance compared to the DGX H100 cluster.

Leading customers, including Google, Meta, and Microsoft, will receive the DGX GH200 reference blueprints before the end of 2023. Additionally, Nvidia will offer the system as a reference architecture for cloud service providers and hyperscalers. Furthermore, Nvidia plans to deploy its own Nvidia Helios supercomputer, comprising four DGX GH200 systems interconnected by Nvidia's Quantum-2 InfiniBand 400 Gb/s networking, for internal research and development purposes. The combined power of these four systems, totaling 1,024 Grace Hopper Superchips, promises to push the boundaries of AI-driven innovations.