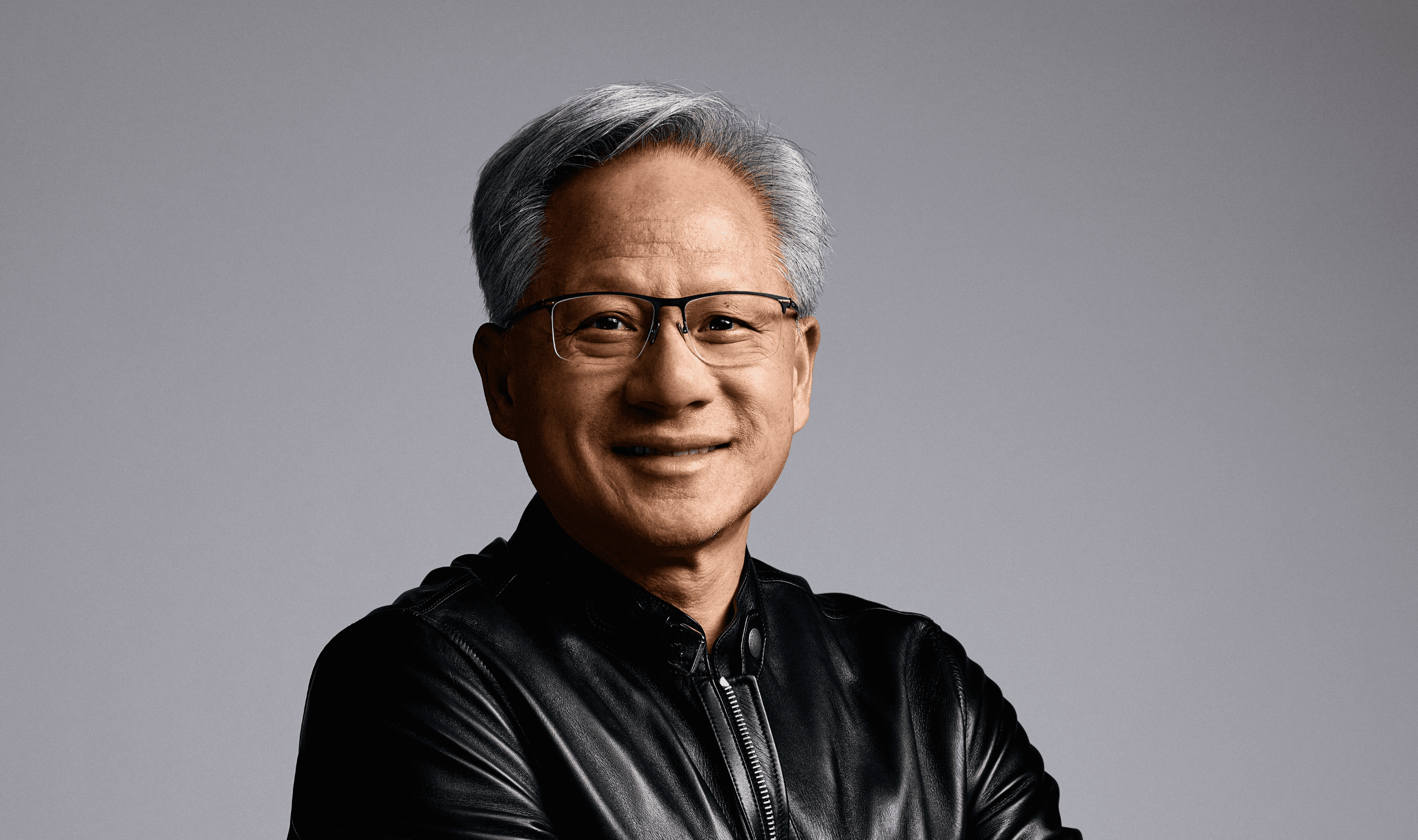

A new inference computing platform from Nvidia will incorporate chips from startup Groq when it debuts at the company's GTC developer conference in San Jose next month, according to people familiar with the plans. The system targets "inference" computing, where trained AI models generate responses to user queries rather than undergoing initial training.

OpenAI has expressed dissatisfaction with how quickly Nvidia's current hardware handles certain ChatGPT tasks, including software development and AI-to-software communication.

The ChatGPT maker needs new hardware that could eventually cover about 10% of its inference computing requirements, one source told Reuters earlier this month.

Before turning to Nvidia's upcoming solution, OpenAI held discussions with chip startups Cerebras and Groq about supplying processors for faster inference. Those talks ended when Nvidia secured a $20 billion licensing agreement with Groq, effectively blocking OpenAI's alternative hardware options.

The move comes alongside Nvidia's broader financial commitment to OpenAI. In September, the chipmaker announced plans to invest up to $100 billion in the AI startup as part of a deal that granted Nvidia equity while providing OpenAI capital to purchase advanced chips.

Nvidia's stock gained 2.3% in recent trading following reports of the new inference platform, adding to the previous day's increase of more than 1%. Shares have risen more than 40% in the past year as investors anticipate continued spending on AI infrastructure.

The Philadelphia Semiconductor Index rose 0.8% on the day of the report, compared with a 0.4% gain in the previous trading session, according to Reuters data.